Recovering from Spectre: JavaScript changes

– The web developer’s perspective –

Popular browsers have implemented temporary mitigations for recently discovered hardware vulnerabilities, and these mitigations might break existing JavaScript applications. We took a look at the limitations introduced by the upcoming updates to see why they were put in place, how they affect existing applications and what can be done to work around them.

Just a few days into 2018, security researchers published a number of extremely serious hardware vulnerabilities that affect virtually all systems with Intel, AMD or ARM processors. As operating system vendors rush to fix the problem in software, the developers of popular internet browsers have also been busy – there have been announcements from all major players.

Browsers have implemented changes to their JavaScript engines to make it harder to exploit these vulnerabilities – and these changes can break your software. Fortunately, the changes are the same on all three platforms. Additional countermeasures are to be expected, with the ultimate goal of securing the browsers while keeping existing functionality intact. For now, the following temporary limitations have been put in place:

- The SharedArrayBuffer object has been disabled without replacement. This object was introduced in ES2017, and the few web applications already using it will break with the next browser update. While disabling this functionality is supposed to be a temporary measure, there is no date set for its reintroduction. Applications relying on this feature might need a significant redesign to keep working – lacking the shared buffer, web workers need to fall back to message passing for synchronization and data transfer.

- The resolution of the performance.now() timer has been reduced from 5 µs to 20 µs, but the method itself will continue to work. If your application relies on sub-millisecond timings, this might be a problem for you; otherwise, you can keep using the timer as usual.
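The fallback mentioned for SharedArrayBuffer can be sketched roughly as follows. This is a minimal illustration under stated assumptions, not code from any browser vendor: `allocateWorkerBuffer` and `sendToWorker` are hypothetical helper names, and the sketch assumes the application can tolerate handing its data to a worker via `postMessage` instead of sharing it.

```javascript
// Hypothetical helper: use SharedArrayBuffer when the browser still exposes it,
// otherwise fall back to a plain ArrayBuffer.
// `globalScope` is a parameter only so the check can be exercised in isolation.
function allocateWorkerBuffer(byteLength, globalScope = globalThis) {
  const shared = typeof globalScope.SharedArrayBuffer === 'function';
  const buffer = shared
    ? new globalScope.SharedArrayBuffer(byteLength)
    : new ArrayBuffer(byteLength);
  return { shared, buffer };
}

// Hypothetical helper: hand the buffer to a worker.
// A SharedArrayBuffer stays visible to both sides after posting.
// A plain ArrayBuffer is listed as a transferable, so ownership moves to the
// worker and the sender can no longer use it.
function sendToWorker(worker, { shared, buffer }) {
  worker.postMessage({ shared, buffer }, shared ? [] : [buffer]);
}
```

In the fallback path the two sides no longer see each other's writes, so any synchronization that previously went through the shared memory has to be expressed as explicit messages.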

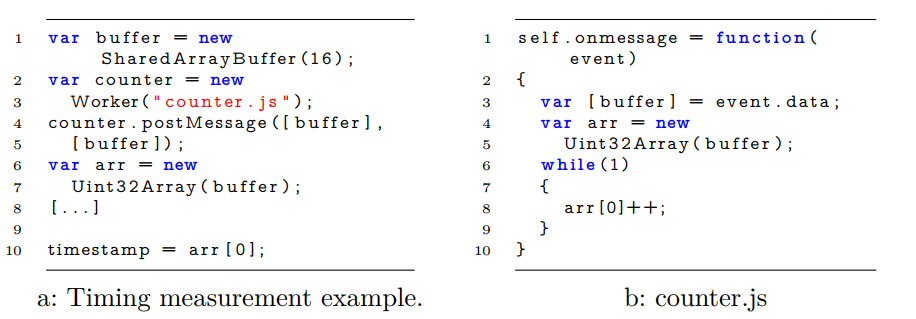

But why were these limitations introduced and how do they help mitigate the vulnerability? Successful exploitation relies heavily on having a precise way to measure the time it takes to perform certain operations – the higher the resolution of the timer, the quicker the attack can be mounted. This explains why the precision of the performance timer was reduced, but what about SharedArrayBuffer? It turns out that it has been proposed and proven that there is a way to create a nanosecond-precision timer using Web Workers and SharedArrayBuffer. By taking advantage of these relatively new JavaScript features, a counter can be continuously incremented on a separate thread, creating a free-running timer which provides precision beyond what is offered by standard performance counters.

Using SharedArrayBuffer to build a precise timer [see link for full paper above]

By disabling these features, browsers made it effectively impossible to measure time beyond a certain precision. This is just a stop-gap measure, and since attackers might come up with new ways to take advantage of the underlying vulnerability, in the upcoming months browser developers will be working on ways to prevent a successful exploit from extracting meaningful data even if precise timing functions are available.
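To make the effect of a coarser timer concrete, here is a small model of what clamping does to a timestamp. It is only an illustration: `coarsen` is a hypothetical function, and rounding down to a multiple of the resolution is an assumption about how such clamping could behave, not a description of any browser's actual implementation.

```javascript
// Hypothetical model of a clamped timer: round a timestamp (in milliseconds,
// as returned by performance.now()) down to a multiple of the timer
// resolution, which is given in microseconds.
function coarsen(timestampMs, resolutionUs) {
  const ticks = Math.floor((timestampMs * 1000) / resolutionUs);
  return (ticks * resolutionUs) / 1000;
}
```

With a 20 µs resolution, `coarsen(1.021, 20)` and `coarsen(1.039, 20)` both return `1.02`, so two events 18 µs apart become indistinguishable. With a 5 µs resolution the same two timestamps would still map to different values.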

The Chromium team also has a few recommendations for all web developers to limit the impact of these (and any related future) vulnerabilities. These best practices help keep sensitive data (like credentials and tokens) outside the memory of the renderer process – as the process might be shared between pages, malicious scripts can use the hardware vulnerabilities to read data from parts of that memory that would otherwise be inaccessible to them.

- Prevent cookies from being loaded into the memory of the renderer using options present in the Set-Cookie header.

  - Mark cookies that should only ever be sent by first-party requests as SameSite=Strict – these cookies will only be sent when the request originates from the same site as the target URL.

  - Mark cookies that are not accessed by JavaScript code as HttpOnly. These cookies will still be sent with HTTP requests but won’t be accessible from JavaScript.

  - For cookies that cannot be marked HttpOnly, make sure to only access document.cookie on pages where and when it is explicitly required. Cookies are only loaded into the renderer right before the first access to this property.

- Make it hard to guess and access the URL of pages that contain sensitive information. All API endpoints that return sensitive data using GET requests need to be protected. If the URL is known to the attacker, the renderer might be forced to load it into its memory. Same-origin policies alone do not protect against these attacks.

  - Use random, unique, expiring URLs that are hard to guess and have no discernible pattern that an attacker can learn.

  - Deploy and require anti-CSRF tokens for all requests to API endpoints.

  - Place and validate the presence and content of SameSite cookies to ensure that the request comes from a first-party site.

- Ensure that all responses are returned with the correct MIME type, and that they carry the X-Content-Type-Options: nosniff header. This will prevent the browser from reinterpreting the contents of the response, and can prevent it from being loaded into the memory of the renderer when a malicious site tries to load it in certain ways. This works great in conjunction with the Site Isolation feature of Chrome, which can separate the different pages by origin into different renderer processes.
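On the server side, several of these recommendations come down to a few headers and tokens. The following Node.js sketch shows one possible shape. All function names are hypothetical, the cookie path and JSON content type are assumptions chosen for the example, and a real application would typically rely on its web framework for cookie and header handling.

```javascript
const crypto = require('crypto');

// Build a Set-Cookie header value for a cookie that JavaScript never needs
// to read and that should only accompany first-party requests.
function buildSessionCookie(name, value) {
  return [
    `${name}=${encodeURIComponent(value)}`,
    'Path=/',           // assumption: the cookie applies to the whole site
    'Secure',           // only sent over HTTPS
    'HttpOnly',         // not exposed through document.cookie
    'SameSite=Strict',  // not sent with cross-site requests
  ].join('; ');
}

// Headers for a response carrying sensitive JSON: an explicit MIME type
// plus nosniff, so the browser does not reinterpret the content.
function sensitiveJsonHeaders() {
  return {
    'Content-Type': 'application/json; charset=utf-8',
    'X-Content-Type-Options': 'nosniff',
  };
}

// Create a random token for a hard-to-guess, expiring URL.
// `now` is a parameter only to make the expiry logic easy to check.
function createExpiringUrlToken(ttlMs, now = Date.now()) {
  return {
    token: crypto.randomBytes(32).toString('hex'),
    expiresAt: now + ttlMs,
  };
}

// Accept a presented token only if it has not expired and matches the
// stored one; the comparison itself runs in constant time.
function isTokenValid(record, presented, now = Date.now()) {
  const expected = Buffer.from(record.token);
  const actual = Buffer.from(String(presented));
  return (
    now < record.expiresAt &&
    expected.length === actual.length &&
    crypto.timingSafeEqual(expected, actual)
  );
}
```

The same random-token pattern could also serve as a starting point for anti-CSRF tokens, provided each token is tied to the user's session on the server.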

These best practices have been around for a while now, but their importance has just skyrocketed as the fundamental assumptions underlying the security model of the data stored in browser memory are being challenged. In general, learning and following industry standard secure coding best practices play an important role in protecting against current and future threats, even when such threats originate from outside the traditional domain of web applications.
